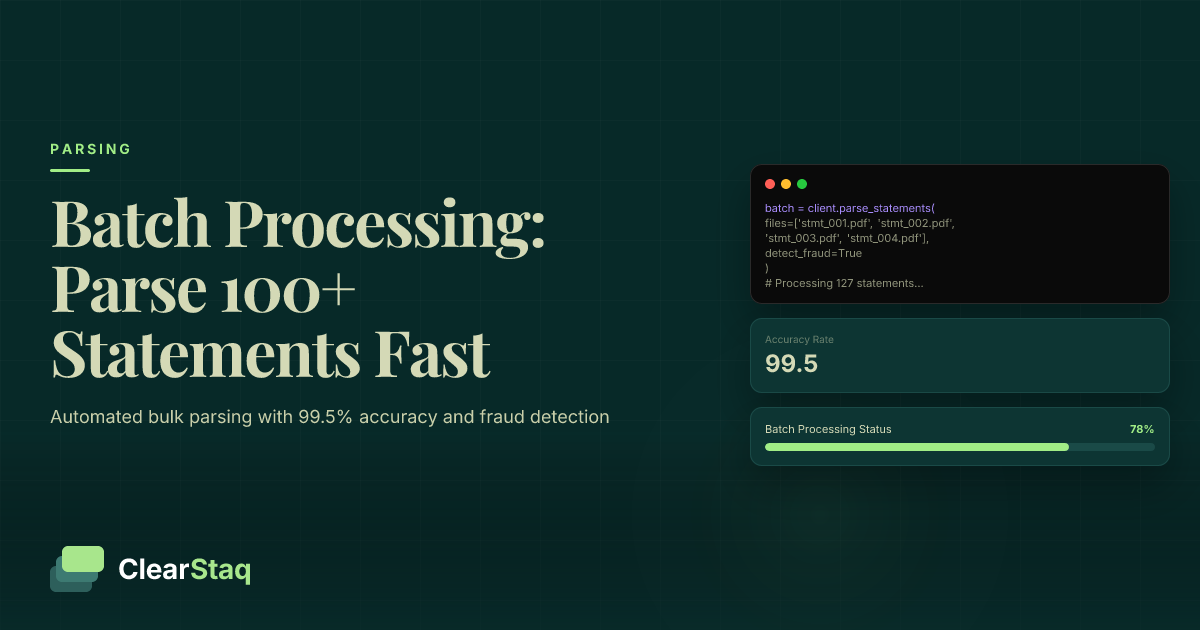

Batch bank statement processing allows you to parse 100+ bank statements simultaneously through automated systems, reducing processing time from hours to minutes. Modern APIs handle multiple file formats, provide real-time status tracking, and maintain 99.5% accuracy across large document volumes while applying fraud detection to every statement.

What you'll learn

- Batch processing cuts the time to process 100 bank statements from 2-3 hours to 2-3 minutes

- Modern APIs support 900+ bank formats simultaneously in a single batch upload

- Real-time status tracking and webhook notifications provide complete visibility into processing progress

- Cost savings of 40-60% per document when using batch rates versus individual processing

- 99.5% accuracy maintained even at high volumes with fraud detection applied to every statement

What is Batch Bank Statement Processing?

Batch bank statement processing is the automated method of parsing multiple bank statements simultaneously rather than processing them one by one. Instead of uploading and waiting for each document individually, you submit an entire collection of statements that get processed in parallel through sophisticated parsing systems.

The core components that make batch processing possible include specialized API endpoints designed for multiple file uploads, intelligent queue management systems that distribute the workload, and parallel processing engines that can handle dozens of documents simultaneously. This technology has become essential for industries that deal with high volumes of financial documents—particularly MCA lenders processing hundreds of applications weekly, CPA firms managing multiple client portfolios, and banks conducting regular portfolio reviews.

Individual vs Batch Processing

The difference between individual and batch processing is stark. At 1-2 minutes per document, processing 100 statements individually takes 100-200 minutes, or roughly 2-3 hours of continuous work. With batch processing, those same 100 statements process in 2-3 minutes total. Resource efficiency improves too: batch processing keeps servers under a consistent workload rather than starting and stopping for each document.

Error handling also differs significantly. Individual processing requires manual intervention for each problematic document, while batch systems automatically flag errors and continue processing the remaining documents. This means one corrupted file won't stop your entire workflow.

Who Needs Batch Processing

High-volume lenders represent the primary users of batch processing technology. MCA lenders reviewing 50-100 applications daily can't afford to process statements individually. The same applies to multi-client CPA firms during month-end closing when they need to analyze statements for dozens of clients simultaneously.

Portfolio managers monitoring existing loans also benefit significantly. Regular statement reviews for fraud detection or financial health monitoring become feasible when you can process an entire portfolio's statements in minutes rather than dedicating days to the task.

Benefits of Batch Processing vs Individual Processing

The advantages of batch processing extend well beyond simple time savings. While the speed improvement—processing 100 statements in 2-3 minutes versus 2-3 hours—is immediately obvious, the benefits compound across cost efficiency, resource optimization, and processing consistency.

Cost efficiency emerges from reduced API calls and lower processing fees. Many providers offer volume-based pricing that makes batch processing significantly cheaper per document. Resource optimization occurs because servers process documents continuously without the overhead of starting and stopping for each file. Most importantly, batch processing ensures uniform standards across all documents—every statement receives the same thorough analysis, including fraud detection and data validation.

Speed and Efficiency Gains

Processing time benchmarks reveal the true advantage of batch systems. A modern batch processor handles 100 standard bank statements in 2-3 minutes, achieving throughput of 30-50 documents per minute. Compare this to manual processing at 1-2 minutes per document, and the time savings become dramatic. Queue optimization further enhances efficiency by intelligently distributing documents across available processing resources.
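As a quick sanity check on these figures, the arithmetic can be sketched in a few lines of Python. The inputs are the benchmarks quoted above; the function names are purely illustrative.

```python
def throughput_per_minute(documents: int, minutes: float) -> float:
    """Documents processed per minute for a batch that took `minutes`."""
    return documents / minutes


def sequential_minutes(documents: int, minutes_per_document: float) -> float:
    """Total time when documents are handled one after another."""
    return documents * minutes_per_document


# Batch: 100 statements in 2-3 minutes.
batch_fast = throughput_per_minute(100, 2)   # 50.0 documents per minute
batch_slow = throughput_per_minute(100, 3)   # about 33.3 documents per minute

# One by one: 100 statements at 1-2 minutes each.
manual_low = sequential_minutes(100, 1)      # 100 minutes
manual_high = sequential_minutes(100, 2)     # 200 minutes
```

The one-by-one range of 100-200 minutes is where the "2-3 hours" figure comes from, and the batch range of roughly 33-50 documents per minute matches the quoted throughput.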

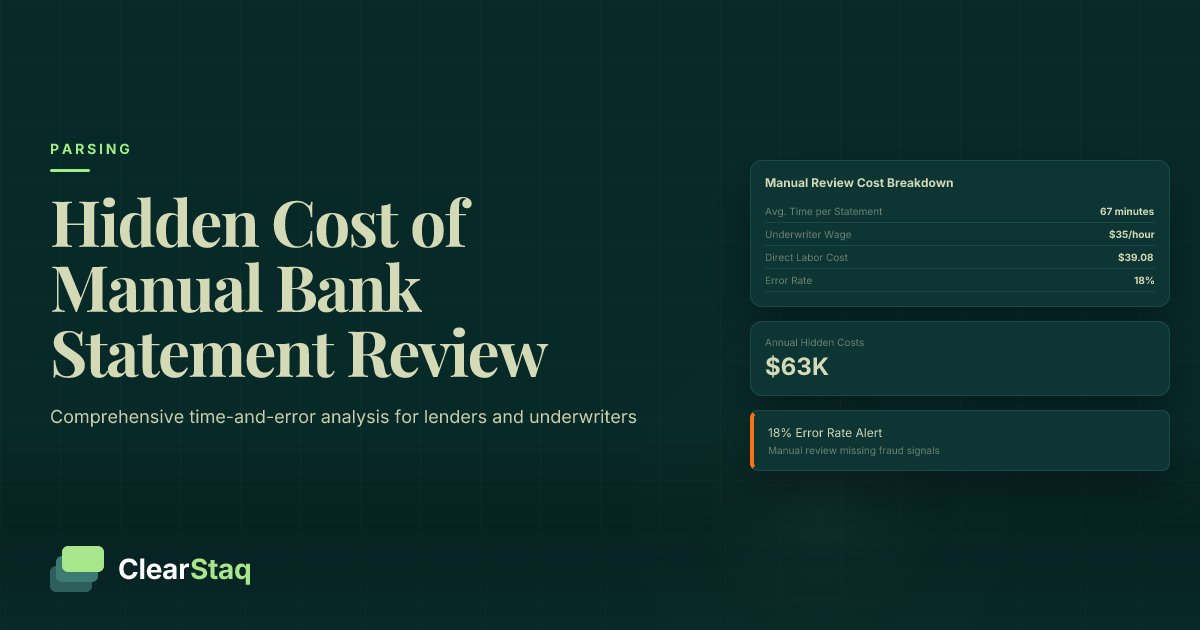

Cost Analysis

Per-document pricing typically drops 40-60% when using batch rates compared to individual processing. Infrastructure costs decrease because batch processing uses server resources more efficiently—one sustained processing session versus hundreds of individual requests. Staff time savings prove even more significant. A task that previously required a full-time employee for hours now completes automatically in minutes.

Accuracy Maintenance

Batch processing maintains quality through systematic approaches. Each document receives the same comprehensive bank statement parsing treatment, with consistent validation rules and fraud detection applied throughout. Error rates actually decrease in batch processing because the system applies uniform standards without the fatigue or inconsistency that affects manual processing. Quality control measures include automatic verification of extracted data and flagging of anomalies for human review.

How Batch Processing Works: The Technical Process

Understanding the technical process helps you implement batch processing effectively. The system begins with document ingestion, accepting multiple files through either multipart form uploads or compressed archives. Modern systems support various upload methods to accommodate different workflows and file volumes.

Queue management forms the backbone of efficient batch processing. As documents arrive, the system validates each file, detects its format from 900+ bank formats, and distributes them across available processing nodes. Parallel processing enables simultaneous parsing of multiple documents, dramatically reducing overall processing time. Throughout this process, real-time status tracking keeps you informed of progress and any issues.

Upload Methods

Multipart form data remains the most common upload method, allowing direct submission of multiple files through API calls. This method works well for moderate volumes up to 100-200 files. Zip file uploads handle larger batches more efficiently, compressing documents for faster transmission while maintaining organization through folder structures.

File size limitations vary by provider but typically allow individual files up to 50MB and batch uploads up to 1GB. These limits accommodate even the longest bank statements while preventing system overload.
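A pre-upload size check can catch oversized files before any bandwidth is spent. The sketch below assumes the typical limits mentioned above (50MB per file, 1GB per batch, counted here in binary units); the constant and function names are illustrative, and your provider's actual limits may differ.

```python
from typing import Dict, List

MAX_FILE_BYTES = 50 * 1024 * 1024        # assumed per-file limit (50MB)
MAX_BATCH_BYTES = 1024 * 1024 * 1024     # assumed per-batch limit (1GB)


def check_batch_sizes(file_sizes: Dict[str, int]) -> List[str]:
    """Return human-readable problems; an empty list means the batch fits."""
    problems = []
    for name, size in file_sizes.items():
        if size > MAX_FILE_BYTES:
            problems.append(f"{name}: {size} bytes exceeds the per-file limit")
    total = sum(file_sizes.values())
    if total > MAX_BATCH_BYTES:
        problems.append(f"batch: {total} bytes exceeds the per-batch limit")
    return problems
```

In practice the `file_sizes` mapping would be built with something like `os.path.getsize` for each file in the upload folder.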

Processing Pipeline

Document validation occurs first, checking each file for readability and format compatibility. The system then performs format detection, identifying which of 900+ supported bank formats each document uses. This automatic detection eliminates the need to pre-sort documents by bank.

Parallel extraction leverages multiple processing cores to parse documents simultaneously. A batch of 100 statements might process across 10-20 parallel threads, each handling multiple documents. Result aggregation combines the extracted data into a unified dataset, maintaining document relationships and processing metadata.
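To make the idea of parallel extraction and result aggregation concrete, here is a minimal sketch using Python's standard thread pool. It is not how any particular provider implements its pipeline: `parse_statement` is a hypothetical stand-in that fakes a result, and the `.corrupt` suffix is just a way to simulate an unreadable file.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def parse_statement(name: str) -> Dict[str, object]:
    """Hypothetical stand-in for a real parser; it only fakes a result."""
    if name.endswith(".corrupt"):
        raise ValueError("unreadable file")
    return {"file": name, "transactions": len(name)}


def process_batch(names: List[str],
                  parser: Callable[[str], Dict[str, object]] = parse_statement,
                  workers: int = 10) -> Dict[str, list]:
    """Parse documents in parallel and aggregate results without aborting on errors."""
    def safe_parse(name: str):
        try:
            return ("ok", parser(name))
        except Exception as exc:  # one bad file must not stop the batch
            return ("error", {"file": name, "error": str(exc)})

    results: Dict[str, list] = {"completed": [], "errored": []}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() preserves input order, so results line up with `names`.
        for outcome, payload in pool.map(safe_parse, names):
            key = "completed" if outcome == "ok" else "errored"
            results[key].append(payload)
    return results
```

Catching exceptions inside each worker is the design choice that lets one corrupted file be flagged while the rest of the batch keeps going.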

Status Monitoring

Real-time progress updates show overall batch completion percentage and individual document status. You'll see which documents are queued, processing, completed, or errored. Webhook notifications provide programmatic updates, enabling automated workflows that respond to completion events.

Error reporting includes detailed information about any processing failures, including the specific document, error type, and suggested remediation. This granular feedback enables quick resolution of issues without reprocessing the entire batch.

Step-by-Step Guide: Processing 100+ Statements

Successfully processing large batches requires proper preparation and execution. This guide walks through the complete process from file organization to results retrieval, ensuring smooth batch processing even for first-time users.

Preparation begins before you upload a single file. Proper organization and naming conventions prevent confusion and enable easier troubleshooting if issues arise. The upload process itself, whether through the ClearStaq API or the web interface, follows consistent patterns that ensure reliable processing.

File Preparation Best Practices

Implement consistent naming conventions that include relevant identifiers like client ID, date range, and bank name. For example: "ClientID_BankName_StartDate_EndDate.pdf" creates self-documenting filenames that simplify tracking. Organize files into logical groups—by client, date range, or processing priority—before beginning the upload.
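A naming convention is easiest to keep consistent when filenames are generated and parsed by code rather than typed by hand. The sketch below follows the "ClientID_BankName_StartDate_EndDate.pdf" pattern; the YYYYMMDD date format and the rule that fields contain no underscores are assumptions, since the pattern itself does not specify them.

```python
from datetime import date
from typing import NamedTuple


class StatementName(NamedTuple):
    client_id: str
    bank_name: str
    start: date
    end: date


def build_filename(client_id: str, bank_name: str, start: date, end: date) -> str:
    """Compose ClientID_BankName_StartDate_EndDate.pdf with YYYYMMDD dates (assumed)."""
    return f"{client_id}_{bank_name}_{start:%Y%m%d}_{end:%Y%m%d}.pdf"


def _to_date(text: str) -> date:
    """Parse a YYYYMMDD string into a date."""
    return date(int(text[:4]), int(text[4:6]), int(text[6:8]))


def parse_filename(filename: str) -> StatementName:
    """Split a filename produced by build_filename back into its parts."""
    stem, _, ext = filename.rpartition(".")
    if ext.lower() != "pdf" or not stem:
        raise ValueError(f"expected a .pdf file, got {filename!r}")
    parts = stem.split("_")
    if len(parts) != 4:
        raise ValueError(f"expected 4 underscore-separated fields in {filename!r}")
    client_id, bank_name, start, end = parts
    return StatementName(client_id, bank_name, _to_date(start), _to_date(end))
```

For example, `build_filename("C1042", "Chase", date(2024, 1, 1), date(2024, 3, 31))` gives `C1042_Chase_20240101_20240331.pdf`, and `parse_filename` recovers the same four fields.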

Format optimization improves processing speed and accuracy. Ensure PDFs are text-based rather than scanned images when possible. Remove password protection before upload, as encrypted files require additional handling that slows batch processing.

Initiating Batch Processing

API request structure for batch uploads uses multipart form-data encoding. Include your authentication credentials in the header and configure parameters like webhook URLs for status updates, output format preferences, and any specific parsing rules.

Pro tip: Start with a small test batch of 5-10 documents to verify your configuration before processing hundreds of statements. This validates your setup without committing extensive processing resources.

Monitoring and Results

Status checking through polling or webhooks keeps you informed of progress. Poll the status endpoint every 10-30 seconds for small batches, or rely on webhooks for larger volumes. Progress tracking shows both overall completion percentage and individual document status.
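A polling loop along these lines might look like the sketch below. The `fetch_status` callable is whatever function queries your provider's status endpoint; the `"state"` field and its `"completed"` and `"failed"` values are assumed names, not a documented response format.

```python
import time
from typing import Callable, Dict


def poll_until_done(fetch_status: Callable[[], Dict[str, object]],
                    interval_seconds: float = 10.0,
                    timeout_seconds: float = 600.0,
                    sleep: Callable[[float], None] = time.sleep) -> Dict[str, object]:
    """Call fetch_status() until the batch reports a terminal state or time runs out."""
    waited = 0.0
    while True:
        status = fetch_status()
        # "completed" and "failed" are assumed names for terminal states.
        if status.get("state") in ("completed", "failed"):
            return status
        if waited >= timeout_seconds:
            raise TimeoutError(f"batch still running after {waited:.0f}s")
        sleep(interval_seconds)
        waited += interval_seconds
```

Passing `sleep` as a parameter keeps the loop easy to test without real waiting; in production the default `time.sleep` is used.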

Data retrieval options include downloading a consolidated dataset of all processed documents or accessing individual results. Most systems provide both JSON and CSV output formats, with CSV being ideal for direct import into spreadsheets or databases.
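If your provider returns JSON only, or you want a custom column layout, converting per-document results to CSV takes a few lines with the standard library. This is a minimal sketch; the field names in the example (`file`, `bank`, `transactions`) are illustrative rather than a documented schema.

```python
import csv
import io
from typing import Dict, List


def results_to_csv(results: List[Dict[str, object]]) -> str:
    """Flatten a list of per-document result dicts into CSV text."""
    # Union of keys across all documents, in first-seen order.
    columns: List[str] = []
    for row in results:
        for key in row:
            if key not in columns:
                columns.append(key)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(results)
    return buffer.getvalue()
```

Documents that lack a field get an empty cell, so a partially parsed statement still lines up with the rest of the dataset.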

Process Your First Batch Free

Ready to process your first batch of bank statements? Start a free trial and upload up to 100 documents to see batch processing in action.

API Implementation for Batch Processing

Implementing batch processing via API provides maximum flexibility and automation potential. The key endpoints include batch upload for submitting documents and status tracking for monitoring progress. Understanding these endpoints and their proper usage ensures smooth integration with your existing workflows.

The batch upload endpoint accepts multiple files simultaneously, returning a batch ID for tracking. Status endpoints provide real-time updates on processing progress, while webhook implementation enables event-driven architectures that respond automatically to processing completion.

Batch Upload Endpoint

The request format uses POST with multipart/form-data encoding. Each file becomes a separate part in the request body, with metadata like filename preserved. Headers must include authentication tokens and content-type specifications.

File handling supports multiple selection methods: individual file parameters for smaller batches or array notation for larger sets. Parameter options include output format selection, webhook URLs for notifications, and processing priority for time-sensitive batches.
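To show what such a request looks like on the wire, the sketch below builds a multipart/form-data POST using only Python's standard library. The endpoint URL, the `files` field name, the form parameter names, and the Bearer token scheme are all placeholders chosen for illustration; check your provider's API reference for the real ones.

```python
import uuid
import urllib.request
from typing import Dict, Tuple

# Hypothetical endpoint; replace with the URL from your provider's documentation.
UPLOAD_URL = "https://api.example.com/v1/batches"


def build_multipart(files: Dict[str, bytes], fields: Dict[str, str]) -> Tuple[bytes, str]:
    """Encode form fields and PDF files as one multipart/form-data body."""
    boundary = uuid.uuid4().hex
    chunks = []
    for name, value in fields.items():
        part = (
            f"--{boundary}\r\n"
            f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
            f"{value}\r\n"
        )
        chunks.append(part.encode())
    for filename, content in files.items():
        header = (
            f"--{boundary}\r\n"
            f'Content-Disposition: form-data; name="files"; filename="{filename}"\r\n'
            "Content-Type: application/pdf\r\n\r\n"
        )
        chunks.append(header.encode() + content + b"\r\n")
    chunks.append(f"--{boundary}--\r\n".encode())
    return b"".join(chunks), f"multipart/form-data; boundary={boundary}"


def build_upload_request(files: Dict[str, bytes], fields: Dict[str, str],
                         token: str) -> urllib.request.Request:
    """Prepare (but do not send) the POST request for a batch upload."""
    body, content_type = build_multipart(files, fields)
    return urllib.request.Request(
        UPLOAD_URL,
        data=body,
        method="POST",
        headers={"Authorization": f"Bearer {token}", "Content-Type": content_type},
    )
```

The request would be sent with `urllib.request.urlopen(request)`; it is left unsent here so the example has no network side effects. Many teams use an HTTP client library that handles the multipart encoding for them, but the structure of the body is the same.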

Status Tracking API

Progress monitoring endpoints return current batch status including total documents, completed count, processing count, and error count. Individual document status provides granular detail about each file's processing state and any errors encountered.
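The relationship between per-document states and the batch-level counts can be sketched as a simple roll-up. The state names used here (`queued`, `processing`, `completed`, `errored`) follow the statuses described earlier, but the exact strings and the shape of the summary are assumptions.

```python
from collections import Counter
from typing import Dict


def summarize_batch(document_states: Dict[str, str]) -> Dict[str, object]:
    """Roll per-document states up into batch-level counts and a completion percentage."""
    counts = Counter(document_states.values())
    total = len(document_states)
    finished = counts["completed"] + counts["errored"]
    return {
        "total": total,
        "completed": counts["completed"],
        "processing": counts["processing"],
        "queued": counts["queued"],
        "errored": counts["errored"],
        "percent_done": round(100 * finished / total, 1) if total else 0.0,
        "needs_review": sorted(n for n, s in document_states.items() if s == "errored"),
    }
```

Counting errored documents as finished means the percentage reaches 100 once nothing is left in the queue, with `needs_review` listing the files that still need attention.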

Completion notifications through the status API include summary statistics and links to download results. The response indicates whether all documents processed successfully or if manual review is needed for specific files.

    {
      "status": "success",
      "fraud_score": 57,
      "transactions": 47,
      "bank": "Chase",
      "processing_time_ms": 238
    }

Webhook Integration

Real-time updates via webhooks eliminate the need for constant polling. Configure a webhook endpoint to receive POST requests when batch status changes. Status change notifications include events like batch_started, document_completed, document_failed, and batch_completed.

Error handling through webhooks provides immediate notification of processing failures. The webhook payload includes error details, affected document identification, and suggested remediation steps.
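On the receiving side, a webhook handler mostly needs to decode the POST body and update its view of the batch. The sketch below handles the four event names listed above; the payload fields (`event`, `document`, `error`) are assumed for illustration, and a real handler would also verify that the request genuinely came from the provider.

```python
import json
from typing import Dict, List


class BatchTracker:
    """Accumulate webhook events for one batch (payload field names are assumed)."""

    def __init__(self) -> None:
        self.completed: List[str] = []
        self.failed: Dict[str, str] = {}
        self.finished = False

    def handle(self, raw_body: bytes) -> None:
        """Apply one webhook POST body to the tracker state."""
        payload = json.loads(raw_body)
        event = payload.get("event")
        if event == "batch_started":
            self.finished = False
        elif event == "document_completed":
            self.completed.append(payload["document"])
        elif event == "document_failed":
            self.failed[payload["document"]] = payload.get("error", "unknown error")
        elif event == "batch_completed":
            self.finished = True
        else:
            raise ValueError(f"unexpected event type: {event!r}")
```

Whatever web framework receives the POST would pass the raw request body to `handle`, and downstream automation can start once `finished` is true.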

Handling Errors and Edge Cases

Even well-prepared batches encounter occasional errors. Understanding common failure scenarios and implementing robust error handling ensures smooth operations. The key is building systems that gracefully handle failures without disrupting the entire batch.

Common failure scenarios include corrupted PDFs that can't be read, unsupported formats like handwritten statements, and timeout issues with extremely large files. Each error type requires different handling strategies, from automatic retry to manual intervention.

Error Types and Causes

File corruption occurs when PDFs are damaged during creation or transmission. These files typically fail immediately during validation. Format issues arise with non-standard bank statements or extremely old formats not in the system's library. Processing timeouts happen with unusually large files or complex statements requiring extensive analysis.

Authentication errors, though less common, occur when API credentials expire during long-running batch processes. Network interruptions can cause partial upload failures that require resume functionality.

Error Detection and Reporting

Individual document status tracking within batches enables precise error identification. Rather than failing an entire batch, the system continues processing valid documents while flagging problems. Error categorization helps prioritize resolution efforts—distinguishing between files that need reprocessing versus those requiring manual review.
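Error categorization can be as simple as a lookup from error code to next action. In the sketch below, both the error codes and the routing are assumptions made for illustration: transient problems (timeouts, network interruptions, expired credentials) are queued for resubmission, and everything else goes to a person.

```python
from typing import Dict, List

# Assumed error codes; a real provider will define its own.
RETRYABLE = {"timeout", "network_interrupted", "auth_expired"}


def triage(errors: Dict[str, str]) -> Dict[str, List[str]]:
    """Split failed documents into those to resubmit and those needing a person."""
    plan: Dict[str, List[str]] = {"resubmit": [], "manual_review": []}
    for document, code in sorted(errors.items()):
        if code in RETRYABLE:
            plan["resubmit"].append(document)
        else:
            # Corrupted files, unsupported formats, and unknown codes
            # go to manual review rather than being retried blindly.
            plan["manual_review"].append(document)
    return plan
```

Sending unknown codes to manual review is the conservative default: retrying a file that will never parse only wastes processing time.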

Detailed error messages include specific failure points, whether during upload, validation, parsing, or data extraction. This granularity accelerates troubleshooting by pinpointing exact issues.

Recovery and Retry Logic

Automatic retry policies handle transient failures like temporary network issues or processing node unavailability. The system typically retries failed documents 2-3 times with exponential backoff before marking them as permanently failed.
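If you are building retry logic on the client side, exponential backoff can be sketched as follows. `TransientError` is a hypothetical exception class standing in for whatever your HTTP client raises on temporary failures, and the one-second base delay is an arbitrary starting point.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. a network blip)."""


def with_retries(task: Callable[[], T],
                 max_retries: int = 3,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Run task(); on TransientError wait base_delay * 2**attempt and try again."""
    for attempt in range(max_retries + 1):
        try:
            return task()
        except TransientError:
            if attempt == max_retries:
                raise  # retries exhausted: treat as permanently failed
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

With the defaults, a document that keeps failing is attempted four times in total, with waits of 1, 2, and 4 seconds between attempts, before the error is re-raised.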

Manual reprocessing options let you resubmit specific failed documents without affecting successfully processed files. Partial batch handling ensures that one problem document doesn't require reprocessing hundreds of successful statements. Because successful results are left untouched, the batch keeps its 99.5% parsing accuracy.

Performance Optimization and Best Practices

Optimizing batch processing performance requires attention to multiple factors. From batch sizing to security considerations, these best practices ensure maximum efficiency while maintaining accuracy and security standards.

The optimal batch size balances processing efficiency with manageable error handling. Too small, and you lose efficiency gains. Too large, and error resolution becomes unwieldy. Security remains paramount—batch processing must maintain the same fraud detection standards as individual processing.

Optimal Batch Configuration

Batch size recommendations vary by use case, but 50-200 documents per batch provides a good balance. This size maximizes parallel processing efficiency while keeping error handling manageable. Larger batches up to 1,000 documents work well for standardized statement sets with low error expectations.
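Splitting a large folder of statements into batches of a chosen size is straightforward. This sketch uses 200 as the default, the upper end of the recommended range; the function name is illustrative.

```python
from typing import Iterator, List, Sequence


def split_into_batches(files: Sequence[str], batch_size: int = 200) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size files."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(files), batch_size):
        yield list(files[start:start + batch_size])
```

For 450 files and the default size, this yields three batches of 200, 200, and 50 files.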

Processing time optimization involves submitting batches during off-peak hours when system resources are most available. Consider time zones if using cloud services—submit batches when data center loads are lowest. Resource allocation improves when you group similar document types together, allowing the system to optimize parsing strategies.

File Organization Strategies

Naming conventions should include machine-readable elements that facilitate automated processing and error tracking. Include client IDs, date ranges, and bank identifiers in a consistent format. Size limitations require attention—split very large statements into smaller date ranges to stay within per-file limits.

Format consistency within batches improves processing speed. While systems handle mixed formats, grouping similar banks or statement types allows optimization of parsing routines.
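Grouping files by bank before upload can be automated when filenames follow the ClientID_BankName_StartDate_EndDate.pdf convention. The sketch below assumes fields never contain underscores; files that do not match the pattern are collected under an "unknown" key rather than dropped.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def group_by_bank(filenames: Iterable[str]) -> Dict[str, List[str]]:
    """Group files by the bank field of ClientID_BankName_StartDate_EndDate.pdf."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for filename in filenames:
        fields = filename.split("_")
        bank = fields[1] if len(fields) == 4 else "unknown"
        groups[bank].append(filename)
    return dict(groups)
```

Each group can then be submitted as its own batch, or the groups can be concatenated so that similar statements sit next to each other in a mixed batch.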

Security and Fraud Detection

Maintaining fraud screening in batch processing requires systems that apply all fraud detection signals to every document. ClearStaq's batch processing applies the same 27 fraud signals to each statement, ensuring security doesn't suffer at scale.

Batch-level security includes encrypted uploads, secure storage during processing, and automated purging after completion. Compliance considerations for financial data require audit trails showing who submitted batches, when processing occurred, and what results were generated.
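An audit trail entry only needs to capture the three facts named above: who submitted the batch, when it was processed, and what came out. A minimal sketch, with assumed field names and a JSON-lines format chosen for illustration:

```python
import json
from datetime import datetime, timezone
from typing import Dict, Optional


def audit_entry(batch_id: str, submitted_by: str, result_counts: Dict[str, int],
                processed_at: Optional[datetime] = None) -> str:
    """Serialize one audit record as a JSON line: who, when, and what came out."""
    when = processed_at or datetime.now(timezone.utc)
    record = {
        "batch_id": batch_id,
        "submitted_by": submitted_by,
        "processed_at": when.isoformat(),
        "results": result_counts,
    }
    return json.dumps(record, sort_keys=True)
```

Appending one such line per batch to write-once storage gives reviewers a simple, searchable history; actual compliance requirements will dictate retention and access controls.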

Real-World Use Cases and ROI

Understanding how organizations successfully implement batch processing provides practical insights for your own deployment. These real-world examples demonstrate measurable ROI and operational improvements across different industries.

MCA Lender Case Study

A mid-sized MCA lender processing 500+ applications weekly reduced their statement review time from 40 hours to 2 hours weekly. Volume handling improved from a maximum of 100 applications daily to over 200, without adding staff. Underwriting efficiency increased as analysts spent time on decision-making rather than data entry.

Processing time reduction proved dramatic—what previously took an entire team now runs automatically overnight. The lender processes all daily applications in a single batch each evening, with results ready for morning review.

CPA Firm Implementation

A regional CPA firm managing 200+ business clients implemented batch processing for monthly financial reviews. Their multi-client processing workflow now handles all client statements in under 30 minutes each month.

Monthly workflows transformed from a week-long process to a single morning task. Client service improvements included faster reporting turnaround and more time for advisory services rather than data processing.

ROI Analysis

Cost savings calculations show typical organizations save 60-80% on processing costs through batch automation. A company processing 1,000 statements monthly saves approximately $4,000 in labor costs alone. Time efficiency metrics demonstrate 50x speed improvements—tasks measured in days now complete in hours.

Accuracy improvements prove equally valuable. Automated batch processing eliminates data entry errors and ensures consistent application of validation rules. ClearStaq's batch processing capabilities maintain 99.5% accuracy even at high volumes, reducing costly errors and rework.

Frequently Asked Questions

How do you process multiple bank statements at once?

Use a batch processing API that accepts multiple files simultaneously via multipart form uploads or zip archives. The system processes all documents in parallel, providing real-time status updates and consolidated results.

How long does it take to parse 100+ bank statements?

With modern batch processing systems like ClearStaq, 100 bank statements typically process in 2-3 minutes. Processing time depends on document complexity, file sizes, and system capacity.

What file formats work best for batch processing?

PDF files work best for batch processing, though most systems support CSV, Excel, and various image formats. Consistent file naming and organization improve processing efficiency and error handling.

Can batch processing handle different bank formats simultaneously?

Yes, advanced systems like ClearStaq can process 900+ different bank formats in a single batch, automatically detecting and parsing each document according to its specific format requirements.

How do you track the status of batch processing jobs?

Most batch processing systems provide real-time status APIs and webhook notifications that report overall progress, individual document status, and completion notifications with detailed results.

Start Processing Statements in Batches Today

Stop processing bank statements one by one. ClearStaq's batch processing handles 100+ statements in minutes with 99.5% accuracy and complete fraud detection.

ClearStaq Team

Product Team

The ClearStaq team builds AI-powered tools for bank statement parsing, fraud detection, and income verification.