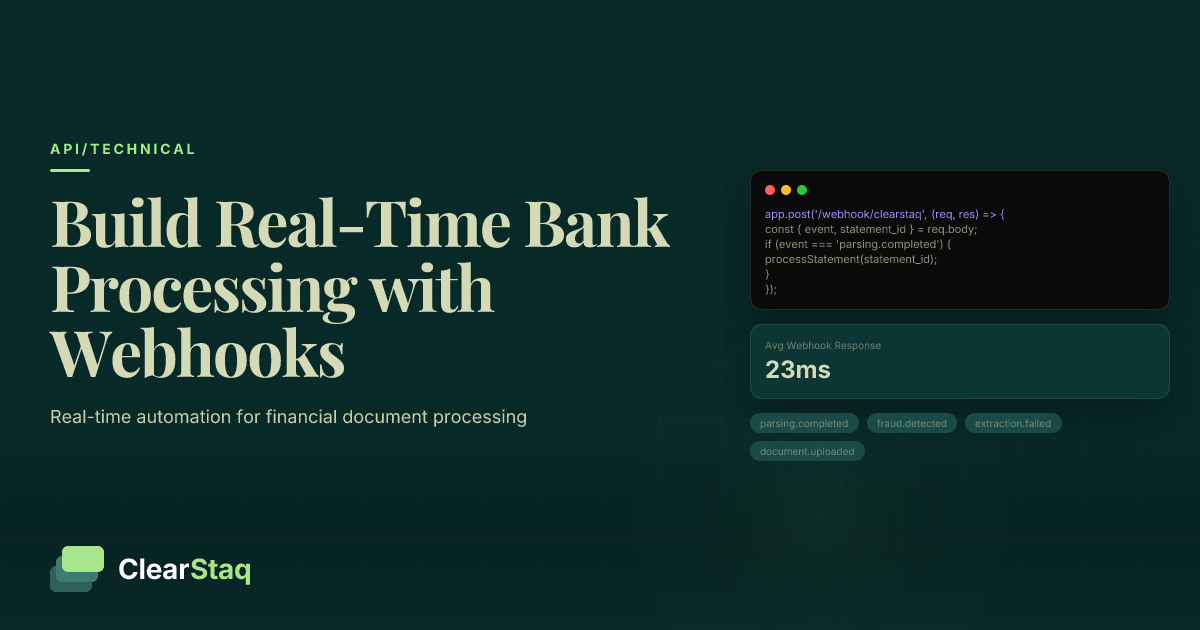

Webhook bank statement processing allows real-time automation by triggering downstream workflows the moment ClearStaq completes parsing or fraud analysis. This eliminates polling delays and enables instant processing of parsed data, fraud alerts, and extraction events through HTTP callbacks to your application endpoints.

What you'll learn

- Webhooks eliminate polling overhead and can reduce API calls by up to 90% compared with traditional polling approaches

- Real-time fraud detection alerts enable immediate workflow triggers for suspicious bank statement patterns

- Complete processing pipelines integrate parsing events with downstream systems like underwriting and accounting platforms

- HMAC-SHA256 signature verification authenticates webhook deliveries, and timestamp checks add replay attack protection

- Production-ready webhook handlers require proper error handling, retry logic, and idempotency checks

What Are Webhooks in Document Processing?

Webhooks are HTTP callbacks that fire automatically when specific events occur in your document processing pipeline. Instead of constantly checking if a bank statement has finished parsing, webhooks push that information to your application the moment it's ready.

Think of webhooks as push notifications for your backend systems. When ClearStaq completes bank statement parsing, it sends an HTTP POST request to your designated endpoint with all the extracted data. This event-driven approach transforms how financial applications handle document processing.

Webhooks vs Polling for Bank Statement Processing

Traditional polling requires your application to repeatedly ask "Is the document ready yet?" This creates unnecessary API calls and delays. With a typical bank statement taking 2-5 seconds to parse, polling every second wastes resources and still introduces latency.

Webhooks flip this model. Your application is notified as soon as processing completes. Webhook-based systems can reduce API calls by up to 90% and deliver results 3-5x faster than polling, with the exact gain depending on your polling interval and parse times. Resource efficiency improves because your servers only activate when there's actual work to process.

Real-time capabilities become possible with webhooks. Fraud detection alerts trigger immediate workflows. Parsing completion initiates downstream analysis without delay. This speed difference matters when processing thousands of documents daily or when time-sensitive decisions depend on the data.

Why Webhooks Matter for Financial Data

Financial workflows demand speed and reliability. Time-sensitive fraud detection can't wait for the next polling interval. A suspicious transaction pattern needs immediate attention, not discovery minutes later during a scheduled check.

Instant underwriting workflows depend on real-time data availability. When a loan application includes bank statements, every second of processing time affects the customer experience. Webhooks enable sub-second handoffs between parsing completion and risk assessment, keeping applicants engaged and reducing abandonment rates.

Compliance requirements often mandate audit trails with precise timestamps. Webhooks provide exact event timing, creating defensible records of when documents were received, processed, and analyzed. This granular tracking helps satisfy regulatory requirements that are harder to meet when event times are only known to the nearest polling interval.

ClearStaq Webhook Architecture Overview

ClearStaq's webhook system centers on four primary event types that cover the complete document lifecycle. Each event carries specific payloads optimized for downstream processing, with built-in reliability features ensuring your critical financial workflows never miss an event.

The architecture prioritizes both speed and reliability. Events fire within milliseconds of completion, while retry mechanisms ensure delivery even if your endpoints temporarily fail. This dual focus on performance and resilience makes ClearStaq webhooks suitable for mission-critical financial applications.

Available Webhook Events

The parsing.completed event fires when bank statement extraction finishes successfully. This webhook includes the complete parsed dataset: transactions, balances, account details, and metadata. It's the primary event for triggering downstream financial analysis.

The fraud.detected event triggers when suspicious patterns exceed configured thresholds. This critical webhook includes fraud scores, specific fraud detection signals that triggered, and recommended actions. Financial institutions use this for immediate alert routing.

The document.processed event provides a summary notification when all processing stages complete. This higher-level event suits workflows that need completion confirmation without detailed data. The extraction.failed event handles error scenarios, enabling graceful degradation and manual review routing when automatic processing isn't possible.
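Because each event type drives a different workflow, a common pattern is a small dispatcher that maps event names to handlers. The sketch below assumes the webhook body is JSON with top-level `type` and `id` fields; those field names and the handler functions are hypothetical placeholders, so check the ClearStaq API reference for the actual envelope.

```python
import json
from typing import Any, Callable, Dict

Handler = Callable[[Dict[str, Any]], str]

# Hypothetical handlers: each returns a short description of the action it would take.
def on_parsing_completed(event: Dict[str, Any]) -> str:
    return f"analyze transactions for {event['id']}"

def on_fraud_detected(event: Dict[str, Any]) -> str:
    return f"route fraud alert for {event['id']}"

def on_document_processed(event: Dict[str, Any]) -> str:
    return f"mark {event['id']} complete"

def on_extraction_failed(event: Dict[str, Any]) -> str:
    return f"send {event['id']} to manual review"

# Event names come from the text; the mapping itself is illustrative.
HANDLERS: Dict[str, Handler] = {
    "parsing.completed": on_parsing_completed,
    "fraud.detected": on_fraud_detected,
    "document.processed": on_document_processed,
    "extraction.failed": on_extraction_failed,
}

def dispatch(raw_body: bytes) -> str:
    """Decode a webhook body and call the handler registered for its event type."""
    event = json.loads(raw_body)
    handler = HANDLERS.get(event.get("type"))  # "type" is an assumed field name
    if handler is None:
        # Unknown or newer event types are ignored rather than treated as errors.
        return f"ignored {event.get('type')}"
    return handler(event)
```

Ignoring unknown types (instead of returning an error) keeps the endpoint from failing if new event types are added later.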

Webhook Payload Structure

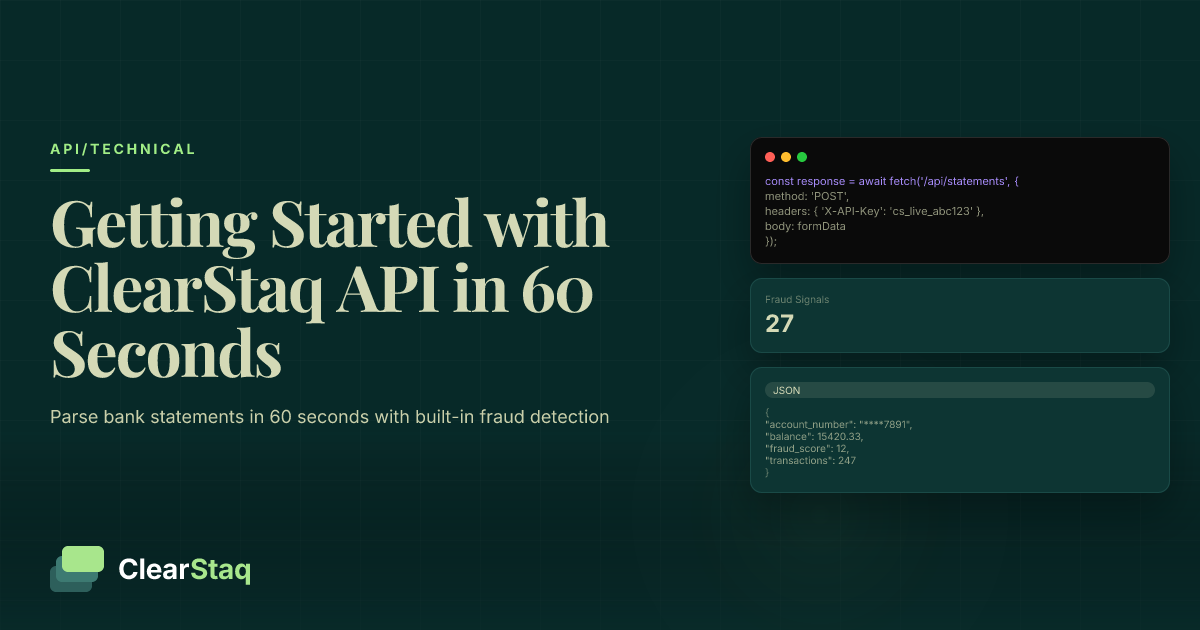

ClearStaq webhook payloads follow a consistent structure across all event types. Event metadata includes unique identifiers, timestamps, and event version numbers for proper handling. This standardization simplifies webhook processing logic across different event types.

Parsed data arrives in a normalized JSON structure. Transaction arrays include dates, descriptions, amounts, running balances, and categorization. Account information covers institution details, account numbers (masked for security), and statement period metadata. Each field uses consistent naming conventions matching the API documentation.

Fraud signal payloads provide actionable intelligence. Beyond simple scores, webhooks include specific signals triggered, confidence levels, and risk factors identified. This granular data enables sophisticated routing logic based on risk profiles and business rules.

Delivery and Retry Logic

ClearStaq implements exponential backoff for failed webhook deliveries. Initial retries happen after 5 seconds, then 25 seconds, then 125 seconds, continuing up to 24 hours. This pattern balances quick recovery from temporary failures with avoiding endpoint overload.

Maximum retry attempts vary by webhook criticality. Fraud detection webhooks retry up to 50 times over 24 hours, ensuring critical alerts eventually reach their destination. Standard parsing completion events retry 25 times. After exhausting retries, events move to a dead letter queue accessible via the API for manual recovery.
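The 5 s, 25 s, 125 s sequence multiplies each interval by five. Uncapped, that growth would exhaust a 24-hour window after only six attempts, so a schedule that fits dozens of retries into 24 hours presumably caps the interval at some point. The sketch below illustrates that arithmetic; the one-hour cap is a hypothetical value, not a documented ClearStaq setting.

```python
from typing import List

def backoff_schedule(base: float = 5.0, factor: float = 5.0,
                     max_interval: float = 3600.0,   # hypothetical cap
                     window: float = 24 * 3600.0) -> List[float]:
    """Return retry delays in seconds that grow geometrically, are capped at
    max_interval, and stop once the cumulative wait would exceed the window.
    Assumes base > 0 and factor > 1."""
    delays: List[float] = []
    delay = base
    elapsed = 0.0
    while elapsed + delay <= window:
        delays.append(delay)
        elapsed += delay
        delay = min(delay * factor, max_interval)
    return delays
```

With these defaults the schedule contains 27 delays totalling 83,105 seconds (about 23 hours): 5, 25, 125, 625, and 3,125 seconds, then hourly retries.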

Dead letter queues preserve failed events for 30 days. This safety net ensures no data loss even during extended outages. Your application can query and replay these events, maintaining data integrity across system failures.

Setting Up Your Webhook Endpoint

Creating a robust webhook endpoint requires attention to security, performance, and reliability. Your endpoint becomes a critical component of the document processing pipeline, handling sensitive financial data with stringent uptime requirements.

Before diving into code, understand that webhook endpoints differ from typical API endpoints. They must handle unpredictable traffic spikes, process quickly to avoid timeouts, and implement proper authentication. Getting these fundamentals right prevents future scaling issues.

Endpoint Requirements and Best Practices

HTTPS is mandatory for all webhook endpoints. ClearStaq refuses to send webhooks over unencrypted connections, protecting sensitive financial data in transit. Your SSL certificate must be valid and properly configured—self-signed certificates won't work.

Return a 200 status code as soon as the webhook is verified and stored, and process the actual data asynchronously to avoid timeout issues. ClearStaq expects responses within 10 seconds; slower responses trigger unnecessary retries. A quick acknowledgment followed by background processing provides the best reliability.

Stay within the timeout by implementing the "acknowledge and queue" pattern. Your endpoint should validate the webhook signature, store the payload in a queue, and return 200. Actual processing happens asynchronously, preventing timeout-related failures and enabling better error handling.
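The acknowledge-and-queue pattern can be sketched as a framework-agnostic function that returns the HTTP status to send. This is a minimal sketch under several assumptions: the `X-ClearStaq-Signature` header name is hypothetical, the signature is assumed to be a hex HMAC-SHA256 of the raw body, timestamp checks are omitted for brevity, and an in-process `queue.Queue` stands in for SQS, RabbitMQ, or Kafka.

```python
import hashlib
import hmac
import queue
from typing import Mapping

def handle_webhook(body: bytes, headers: Mapping[str, str], secret: bytes,
                   work_queue: "queue.Queue[bytes]") -> int:
    """Verify, enqueue, and acknowledge. Returns the HTTP status code to send back."""
    received = headers.get("X-ClearStaq-Signature", "")  # hypothetical header name
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(received.encode(), expected.encode()):
        return 401  # reject unsigned or tampered requests
    try:
        work_queue.put_nowait(body)  # a background worker does the real processing
    except queue.Full:
        return 503  # a 5xx response asks the sender to retry later
    return 200
```

Real web frameworks expose headers through case-insensitive mappings; a plain `dict` is used here only to keep the sketch self-contained.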

Development Environment Setup

Using ngrok for local testing streamlines webhook development. ngrok exposes your local development server through a public HTTPS URL, so ClearStaq can deliver real webhooks to it without you deploying code. Install ngrok, start your local server, then run `ngrok http 3000` to generate a public HTTPS URL.

Staging webhook endpoints require careful configuration management. Create separate webhook URLs for development, staging, and production environments. Use environment variables to manage these URLs, preventing accidental webhook delivery to development servers from production systems.

Environment variables should include webhook URLs, signing secrets, and processing flags. A typical setup includes `CLEARSTAQ_WEBHOOK_URL`, `CLEARSTAQ_WEBHOOK_SECRET`, and `CLEARSTAQ_WEBHOOK_PROCESSING_ENABLED`. This configuration pattern scales across environments while maintaining security.
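Loading these settings in one place and failing fast on missing values prevents a misconfigured instance from silently accepting unverified webhooks. The variable names below come from the text; the `WebhookConfig` type, the default of processing enabled, and the accepted truthy values are assumptions for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

@dataclass(frozen=True)
class WebhookConfig:  # hypothetical container for the three settings
    url: str
    secret: str
    processing_enabled: bool

def load_config(env: Optional[Mapping[str, str]] = None) -> WebhookConfig:
    """Read webhook settings from the environment, failing fast if any are missing."""
    env = os.environ if env is None else env
    missing = [k for k in ("CLEARSTAQ_WEBHOOK_URL", "CLEARSTAQ_WEBHOOK_SECRET")
               if not env.get(k)]
    if missing:
        raise RuntimeError(f"missing environment variables: {', '.join(missing)}")
    # Assumed default: processing is enabled unless explicitly turned off.
    flag = env.get("CLEARSTAQ_WEBHOOK_PROCESSING_ENABLED", "true").strip().lower()
    return WebhookConfig(
        url=env["CLEARSTAQ_WEBHOOK_URL"],
        secret=env["CLEARSTAQ_WEBHOOK_SECRET"],
        processing_enabled=flag in ("1", "true", "yes"),
    )
```

Passing an explicit mapping makes the loader easy to test without touching the real process environment.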

Configuring Webhooks in ClearStaq

Dashboard setup begins in the ClearStaq API settings. Navigate to the Webhooks section, where you'll find options for creating new webhook endpoints. Each endpoint can subscribe to specific events, allowing granular control over which notifications you receive.

Event selection determines which webhooks fire to each endpoint. Create focused endpoints that handle specific event types rather than one endpoint processing everything. This separation simplifies debugging, enables independent scaling, and improves error isolation.

URL configuration supports both static endpoints and those with dynamic path parameters. While `https://api.yourcompany.com/webhooks/clearstaq` works well, you can also use `https://api.yourcompany.com/webhooks/clearstaq/{customer_id}` for multi-tenant architectures. ClearStaq replaces template variables with actual values during delivery.

Handling ClearStaq Webhook Events

Effective webhook handling transforms raw events into business value. Each event type requires specific processing logic, error handling, and data validation. Building robust handlers prevents data loss and ensures reliable automation.

The key to successful webhook processing lies in understanding each event's purpose and payload structure. Parsing completion events drive most workflows, while fraud detection events require immediate attention. Treating each event type appropriately maximizes the value of your webhook integration.

Processing Parsing Completion Events

Accessing parsed transaction data starts with payload validation. The webhook includes a complete transaction array with dates, descriptions, amounts, and running balances. Verify data completeness before processing—missing required fields indicate potential parsing issues requiring investigation.

Handling extraction metadata provides context for the parsed data. Statement date ranges, account numbers, and institution details arrive in standardized formats. This metadata enables proper data association, ensuring transactions link to the correct accounts and time periods in your system.

Data validation should check for logical consistency. Running balances should calculate correctly from transaction amounts. Dates must fall within the statement period. Transaction counts should match summary statistics. These validations catch edge cases that pass parsing but contain logical errors.
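The three consistency checks above can be expressed as one function that returns a list of problems. This is a sketch that assumes a hypothetical transaction shape (posting date, signed amount, running balance), chronological ordering, and a summary transaction count; adapt the field names to the actual payload.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import List

@dataclass
class Transaction:  # hypothetical shape of one parsed transaction
    posted: date
    amount: Decimal          # positive = credit, negative = debit (assumed convention)
    running_balance: Decimal

def validate_statement(opening_balance: Decimal, period_start: date, period_end: date,
                       transactions: List[Transaction], expected_count: int) -> List[str]:
    """Return human-readable consistency problems (an empty list means the data passed)."""
    problems: List[str] = []
    if len(transactions) != expected_count:
        problems.append(f"count mismatch: {len(transactions)} != {expected_count}")
    balance = opening_balance
    for i, tx in enumerate(transactions):
        if not (period_start <= tx.posted <= period_end):
            problems.append(f"tx {i}: date {tx.posted} outside statement period")
        balance += tx.amount
        if balance != tx.running_balance:
            problems.append(f"tx {i}: running balance {tx.running_balance} != computed {balance}")
            balance = tx.running_balance  # resync so one error is not repeated downstream
    return problems
```

Using `Decimal` rather than `float` keeps balance comparisons exact for currency amounts.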

Handling Fraud Detection Webhooks

Fraud score interpretation requires understanding ClearStaq's scoring model. Scores range from 0-100, with higher values indicating greater fraud risk. Establish threshold policies: scores above 80 typically warrant immediate investigation, 50-80 require additional verification, and below 50 can proceed with standard processing.

Alert workflows must balance automation with human oversight. High-risk alerts should trigger immediate notifications to fraud teams. Medium-risk events might pause automated processing pending review. Low-risk scores can proceed automatically with enhanced monitoring. This tiered approach optimizes resource allocation while maintaining security.

Risk-based routing enables sophisticated fraud prevention. Route high-risk applications to senior underwriters. Send medium-risk cases through additional verification steps. Process low-risk applications via standard automated workflows. This dynamic routing based on webhook fraud scores improves both security and efficiency.
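The threshold policy described above (above 80, 50 to 80, below 50) maps directly onto a small routing function. The thresholds are the example policy from this section, exposed as parameters so they can be tuned; treating exactly 80 and exactly 50 as "additional verification" is an interpretation of the "50-80" range.

```python
from enum import Enum

class Route(Enum):
    INVESTIGATE = "immediate investigation"   # score above 80
    VERIFY = "additional verification"        # score 50 to 80 inclusive
    STANDARD = "standard processing"          # score below 50

def route_by_fraud_score(score: float, high: float = 80, low: float = 50) -> Route:
    """Map a 0-100 fraud score onto the three handling tiers."""
    if not 0 <= score <= 100:
        raise ValueError(f"fraud score out of range: {score}")
    if score > high:
        return Route.INVESTIGATE
    if score >= low:
        return Route.VERIFY
    return Route.STANDARD
```

Rejecting out-of-range scores, rather than clamping them, surfaces payload problems instead of silently routing them.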

Implementing Idempotency

Duplicate event detection prevents double-processing of webhooks. ClearStaq includes unique event IDs in every webhook. Store these IDs in a database or cache, checking for duplicates before processing. This simple check prevents duplicate transactions, double fraud alerts, and data corruption.

Event ID tracking requires persistent storage with appropriate retention. Redis works well for recent events, while PostgreSQL handles long-term storage. Implement a two-tier system: check Redis for recent duplicates (fast), then PostgreSQL for older events (comprehensive). This approach balances performance with completeness.

State management ensures consistent processing despite failures. Track webhook processing states: received, processing, completed, or failed. This state tracking enables resume capability after failures and provides clear audit trails. Use database transactions to update state atomically with business logic, maintaining consistency.
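A minimal sketch of duplicate detection plus state tracking, using Python's built-in `sqlite3` as a stand-in for the PostgreSQL tier (the Redis fast path is omitted). The table and column names are hypothetical. In PostgreSQL the equivalent insert would use `INSERT ... ON CONFLICT DO NOTHING`; in production, update the state in the same transaction as your business-logic writes, as described above.

```python
import sqlite3

# Hypothetical table: one row per event ID, with the four processing states.
SCHEMA = """
CREATE TABLE IF NOT EXISTS webhook_events (
    event_id TEXT PRIMARY KEY,
    state    TEXT NOT NULL CHECK (state IN ('received', 'processing', 'completed', 'failed'))
)
"""

def record_if_new(conn: sqlite3.Connection, event_id: str) -> bool:
    """Insert the event as 'received'; return False if the ID was already seen."""
    with conn:  # commits on success, rolls back on exception
        cur = conn.execute(
            "INSERT OR IGNORE INTO webhook_events (event_id, state) VALUES (?, 'received')",
            (event_id,),
        )
    return cur.rowcount == 1  # 0 rows changed means the insert was ignored (duplicate)

def set_state(conn: sqlite3.Connection, event_id: str, state: str) -> None:
    """Move an event to a new processing state atomically."""
    with conn:
        conn.execute("UPDATE webhook_events SET state = ? WHERE event_id = ?",
                     (state, event_id))
```

Relying on the primary-key constraint, rather than a separate "check then insert", avoids a race when two deliveries of the same event arrive at once.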

Building the Complete Processing Pipeline

A production-ready webhook pipeline orchestrates multiple components into a cohesive system. From initial document upload through final business logic execution, each step must handle both success and failure scenarios gracefully.

The end-to-end workflow begins when users upload bank statements to ClearStaq. Parsing begins immediately, triggering webhooks upon completion. Your pipeline receives these events, processes the data, and executes business logic like underwriting decisions or accounting entries. This seamless flow enables powerful automation.

Pipeline Architecture Design

Microservices architecture suits webhook processing well. Separate services handle webhook receipt, data validation, business logic, and integration with downstream systems. This separation enables independent scaling, focused testing, and easier maintenance. Each service owns a specific responsibility within the pipeline.

Queue systems provide reliable message passing between services. Amazon SQS, RabbitMQ, or Kafka work well for webhook payloads. The webhook endpoint writes to the queue and returns immediately. Processing services consume from queues at their own pace, providing natural backpressure handling and failure isolation.

Data flow patterns should minimize coupling between components. Event-driven architectures work particularly well. Each service publishes events upon completion, triggering downstream processing. This pattern enables easy pipeline modifications without changing existing components.

Database Integration Patterns

Storing webhook events provides audit trails and enables replay functionality. Create tables for webhook metadata (event ID, type, timestamp) and payload data (stored as JSONB in PostgreSQL). This dual structure balances query performance with flexibility.

Associating webhooks with documents requires careful key management. ClearStaq includes document IDs in webhook payloads. Use these as foreign keys to link webhook data with your document records. This association enables queries like "show all fraud alerts for this customer's documents."

Audit trails must capture complete processing history. Log webhook receipt, processing start, completion, and any errors. Include timing data for performance analysis. These comprehensive logs prove invaluable for debugging, compliance, and optimization efforts.

Connecting to Business Systems

CRM integration typically uses the parsing.completed webhook to update customer records. Extract key financial metrics from parsed statements—average balance, transaction volume, cash flow analysis—and sync to CRM fields. This automation eliminates manual data entry while ensuring CRM accuracy.

Underwriting platforms benefit from immediate data availability. Configure webhooks to trigger underwriting workflows upon parsing completion. The platform receives structured transaction data, enabling automated debt service coverage calculations, cash flow analysis, and risk scoring without manual intervention.

Accounting software connections require transaction-level integration. Map parsed transactions to chart of accounts categories. Create journal entries for reconciliation. Update cash position reports. Modern accounting APIs make this integration straightforward, turning bank statement webhooks into real-time bookkeeping automation.

Security and Authentication Best Practices

Webhook security protects against data tampering, replay attacks, and unauthorized access. Financial data requires the highest security standards—a single compromised webhook could expose sensitive information or enable fraud.

ClearStaq implements multiple security layers for webhook delivery. Understanding and properly implementing these security measures on your end ensures end-to-end protection of financial data throughout the webhook pipeline.

Signature Verification Implementation

HMAC-SHA256 verification confirms webhooks originate from ClearStaq. Each webhook includes a signature header calculated using your webhook secret and the request body. Recreate this signature and compare—mismatches indicate tampering or spoofing attempts.

Preventing replay attacks requires timestamp validation. ClearStaq includes a timestamp in the signed webhook content. Reject webhooks older than 5 minutes; this window allows for reasonable network delays while limiting how long a captured request remains usable. Within that window, event-ID deduplication catches any replayed request.

Secret key management demands careful attention. Store webhook secrets in environment variables or secure key management systems—never in code. Rotate secrets quarterly or immediately if compromised. ClearStaq supports multiple active secrets during rotation, ensuring zero-downtime updates.
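The three measures above (HMAC-SHA256 verification, a 5-minute timestamp window, and multiple active secrets during rotation) combine into one check. This sketch assumes the signed message is the timestamp, a dot, and the raw body, hex-encoded; that format is a common convention but an assumption here, so confirm ClearStaq's exact signing scheme and header names before relying on it.

```python
import hashlib
import hmac
import time
from typing import Iterable, Optional

TOLERANCE_SECONDS = 300  # reject deliveries older than 5 minutes

def verify_signature(body: bytes, timestamp: str, signature: str,
                     secrets: Iterable[bytes], now: Optional[float] = None) -> bool:
    """Return True if the signature matches any active secret and the timestamp is fresh.

    Assumed scheme: hex(HMAC-SHA256(secret, b"<timestamp>." + body)).
    """
    try:
        sent_at = int(timestamp)
    except ValueError:
        return False
    current = time.time() if now is None else now
    if abs(current - sent_at) > TOLERANCE_SECONDS:
        return False  # too old (possible replay) or clock skew too large
    message = timestamp.encode() + b"." + body
    for secret in secrets:  # more than one secret may be active during rotation
        expected = hmac.new(secret, message, hashlib.sha256).hexdigest()
        if hmac.compare_digest(expected.encode(), signature.encode()):
            return True
    return False
```

Signing the timestamp together with the body matters: if the timestamp were sent unsigned, an attacker could replay an old request with a fresh timestamp.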

Network Security Considerations

HTTPS enforcement starts at the network edge. Configure load balancers to reject non-HTTPS connections entirely. Use modern TLS versions (1.2 or higher) and strong cipher suites. Regular security scans ensure your HTTPS configuration remains robust against evolving threats.

IP restrictions add another security layer. ClearStaq publishes webhook source IP ranges. Configure firewalls to only accept webhook traffic from these IPs. This network-level filtering stops many attacks before they reach your application.

Firewall configuration should implement defense in depth. Beyond IP restrictions, limit webhook endpoints to specific ports and paths. Rate limit incoming connections to prevent denial-of-service attacks. Monitor firewall logs for suspicious patterns indicating potential attacks.

Data Security and Compliance

PCI DSS does not strictly govern bank statement data, since it isn't payment card data, but its security principles are a sensible baseline for financial data delivered via webhooks. Encrypt data at rest, limit access on a need-to-know basis, and maintain comprehensive audit logs.

Data retention policies must balance compliance with storage costs. Keep webhook payloads for the minimum required period—typically 7 years for financial records. Implement automated archival to cold storage after 90 days. Delete data beyond retention requirements to minimize breach exposure.

Encryption at rest protects stored webhook data. Use database-level encryption for webhook storage. Encrypt queue messages containing webhook payloads. Even application logs should mask sensitive fields. This comprehensive encryption ensures data remains protected throughout its lifecycle.

Error Handling and Retry Logic

Robust error handling differentiates production-ready webhook implementations from prototypes. Networks fail, databases go down, and services experience outages. Your webhook pipeline must handle these failures gracefully.

Understanding both ClearStaq's retry behavior and implementing your own error handling creates a resilient system. This dual-layer approach ensures critical financial data processing continues despite individual component failures.

ClearStaq Retry Behavior

Exponential backoff timing prevents overwhelming struggling endpoints. Retries begin at 5 seconds, 25 seconds, and 125 seconds, with intervals continuing to grow across a window of up to 24 hours. This pattern quickly recovers from brief outages while avoiding excessive load during extended failures.

Maximum retry attempts vary by event criticality. Fraud detection webhooks—the most critical—retry 50 times over 24 hours. Standard parsing webhooks retry 25 times. Failed extraction events retry 10 times. This tiered approach focuses retry efforts on the most important events.

Failure conditions that trigger retries include network timeouts, 5xx status codes, and connection failures. ClearStaq doesn't retry 4xx errors except 429 (rate limit). Understanding these conditions helps design appropriate error responses from your endpoints.
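These rules can be encoded as a helper that predicts whether a given endpoint response will be redelivered, which is useful when deciding which status codes your handler should return. The text does not say how 3xx redirects are treated; returning False for them here is an assumption.

```python
from typing import Optional

def will_be_retried(status: Optional[int]) -> bool:
    """Predict whether a delivery outcome triggers a retry, per the rules above.

    status is the HTTP status your endpoint returned, or None for a timeout or
    connection failure (no response received).
    """
    if status is None:
        return True   # network timeout or connection failure
    if status == 429:
        return True   # rate limited: the one retried 4xx code
    if 500 <= status <= 599:
        return True   # server errors
    return False      # 2xx success, other 4xx errors, and (assumed) 3xx are final
```

In practice this means returning a 4xx such as 400 or 401 only for requests that can never succeed, like a bad signature, and a 5xx such as 503 for transient problems you want redelivered.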

Client-Side Error Handling

Graceful degradation keeps your application functional during webhook failures. Design workflows that can proceed with degraded functionality when real-time data isn't available. Queue documents for manual review. Send notifications about processing delays. Maintain service availability despite webhook issues.

Circuit breakers prevent cascading failures in your webhook pipeline. If a downstream service fails repeatedly, temporarily stop forwarding webhooks to it. This prevents queue buildup and protects healthy services. Gradually re-enable the service to test recovery.
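A minimal circuit breaker following the common consecutive-failure pattern: after a threshold of failures the circuit opens and calls are rejected; after a cooldown, one trial call is allowed through, and its outcome decides whether the circuit closes or reopens. The threshold and cooldown values are hypothetical, and this single-threaded sketch does not limit concurrent trial calls.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

class CircuitOpenError(RuntimeError):
    """Raised when calls are short-circuited because the breaker is open."""

class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # cooldown in seconds before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("downstream service temporarily disabled")
            # Cooldown elapsed ("half-open"): let this call through as a trial.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or reopen after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```

Webhooks rejected with `CircuitOpenError` should stay in the queue (or move to the dead letter queue) rather than being dropped.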

Dead letter processing handles webhooks that fail all retry attempts. Create a separate queue for failed webhooks. Build tools for operators to inspect, modify, and replay these events. This manual recovery option ensures no permanent data loss from temporary failures.

Monitoring and Alerting

Webhook delivery metrics provide critical operational visibility. Track success rates, processing times, and retry counts. Sudden changes in these metrics often indicate problems before users notice. Dashboard these metrics for real-time operational awareness.

Error rate monitoring requires nuanced thresholds. Expect baseline error rates around 0.1% from network issues. Alert when rates exceed 1% for potential endpoint problems. Urgent alerts trigger above 5%, indicating serious issues requiring immediate attention.
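The tiered thresholds translate into a simple classification over a monitoring window. The 1% and 5% cut-offs come from the text; treating a window with no traffic as "ok" is an assumption, since silence may deserve its own volume-based alert.

```python
def alert_level(failed: int, total: int) -> str:
    """Classify a window's webhook error rate using the 1% and 5% thresholds."""
    if total == 0:
        return "ok"  # no traffic in this window; monitor volume separately
    rate = failed / total
    if rate > 0.05:
        return "urgent"   # serious issue requiring immediate attention
    if rate > 0.01:
        return "alert"    # potential endpoint problem
    return "ok"           # within the expected ~0.1% baseline noise
```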

Alert thresholds should consider business impact. Fraud detection webhook failures warrant immediate paging. Parsing completion failures during business hours need quick attention. Failed extraction events for rarely-used formats might only require daily review. Match alerting urgency to business criticality.

Testing Your Webhook Integration

Comprehensive testing ensures your webhook pipeline handles both happy paths and edge cases. Testing webhooks presents unique challenges—external triggers, network dependencies, and asynchronous processing complicate traditional testing approaches.

A solid testing strategy covers unit tests for individual components, integration tests for the complete pipeline, and load tests for production readiness. Each testing level validates different aspects of your webhook implementation.

Local Development Testing

ngrok setup enables realistic webhook testing from your development machine. Install ngrok, start your application locally, then run `ngrok http 3000` to create a public HTTPS tunnel. Update ClearStaq webhook configuration with the ngrok URL for immediate local testing.

Mock webhook servers simulate ClearStaq webhooks for unit testing. Create test fixtures with sample webhook payloads. Build a simple HTTP server that sends these payloads to your endpoint. This approach enables rapid testing without depending on actual document processing.

Unit testing webhooks requires mocking external dependencies. Test signature verification with valid and invalid signatures. Verify idempotency by sending duplicate events. Ensure error handling works by simulating database failures. These focused tests catch bugs early in development.

Integration Testing Strategies

End-to-end test flows validate the complete pipeline. Upload a test document, wait for the webhook, verify data flows through all systems correctly. These tests catch integration issues between components that unit tests miss.

Webhook replay testing ensures reliability. Save production webhook payloads (with sensitive data masked) as test fixtures. Replay these through your pipeline to verify continued compatibility. This regression testing catches breaking changes early.

Load testing reveals scaling limits. Use tools like Apache JMeter or k6 to simulate high webhook volumes. Start with expected production load, then increase until the system fails. This testing identifies bottlenecks before they impact production.

Production Validation

Canary deployments minimize risk when updating webhook handlers. Route 5% of webhooks to the new version initially. Monitor error rates and performance metrics. Gradually increase traffic percentage as confidence grows. This approach limits blast radius of potential issues.
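Canary routing usually happens at the load balancer, but it can also be done in application code. This sketch uses hash-based bucketing on the event ID (a common technique, not a ClearStaq feature) so that about 5% of events go to the new handler, and retries of the same event always land on the same version.

```python
import hashlib

def routes_to_canary(event_id: str, percent: float = 5.0) -> bool:
    """Deterministically send about `percent`% of events to the canary handler."""
    digest = hashlib.sha256(event_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < percent * 100  # 5.0% -> buckets 0..499
```

Raising `percent` gradually widens the canary share while keeping every previously routed event on the same side of the split.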

Health checks ensure webhook endpoints remain available. Implement a `/health` endpoint that verifies database connectivity, queue access, and other dependencies. Monitor this endpoint externally. Automated systems can route webhooks away from unhealthy instances.

Rollback procedures must be quick and reliable. Keep the previous webhook handler version deployed but inactive. If issues arise, update load balancer rules to route traffic back. This immediate rollback capability minimizes downtime during problems.

Performance Optimization Tips

Optimizing webhook performance improves reliability and reduces costs. Small improvements in processing efficiency compound when handling thousands of daily webhooks. Focus optimization efforts on the most frequent code paths for maximum impact.

Performance optimization must balance speed with reliability. The fastest webhook handler isn't useful if it drops events or corrupts data. Measure performance holistically—considering throughput, latency, error rates, and resource usage together.

Endpoint Performance Optimization

Response time targets should aim for under 100ms. ClearStaq expects responses within 10 seconds, but faster responses improve overall system throughput. Achieve this by immediately queuing webhooks for async processing rather than handling synchronously.

Async processing patterns separate webhook receipt from business logic. Use message queues, background job processors, or event streams. This separation allows webhook endpoints to scale independently from processing logic. It also improves fault isolation—processing failures don't impact webhook receipt.

Resource allocation requires careful tuning. Webhook endpoints need minimal CPU but fast network I/O. Processing workers need more CPU and memory. Database connections become bottlenecks at scale. Profile your pipeline to identify resource constraints and scale appropriately.

High-Volume Processing Strategies

Queue management becomes critical at scale. Implement separate queues for different event types, allowing independent scaling and prioritization. Use queue depth monitoring to trigger automatic scaling. Dead letter queues isolate problem events without blocking healthy processing.

Horizontal scaling handles increasing webhook volumes better than vertical scaling. Add more webhook endpoint instances behind a load balancer. Scale processing workers based on queue depth. This approach provides better fault tolerance and easier capacity planning.

Load balancing should consider webhook type and customer tier. Route fraud detection webhooks to dedicated high-priority endpoints. Separate endpoints for high-volume customers prevent them from impacting others. This intelligent routing improves overall system reliability.

Database and Storage Optimization

Indexing strategies make or break webhook query performance. Index webhook event IDs for duplicate detection. Create composite indexes on timestamp and event type for analytics queries. Monitor slow query logs to identify missing indexes as usage patterns evolve.

Data partitioning manages growing webhook datasets. Partition by date for time-series queries. Archive old partitions to cheaper storage. This approach maintains query performance while controlling costs as data volumes grow.

Archive policies balance accessibility with cost. Keep recent webhooks (30 days) in hot storage for quick access. Move older data to warm storage. Archive the oldest data to cold storage for compliance. This tiered approach optimizes both performance and cost.

Real-World Implementation Examples

Seeing webhook pipelines in production contexts clarifies implementation patterns. These examples showcase different architectural approaches suited to various business requirements and scales.

Each implementation prioritizes different aspects—speed, reliability, or flexibility—based on business needs. Understanding these tradeoffs helps design pipelines matching your specific requirements.

MCA Underwriting Pipeline

Automated risk scoring begins immediately upon webhook receipt. The parsing.completed webhook triggers a cascade of analysis: daily balance calculations, cash flow trends, and revenue estimation. These metrics feed into proprietary risk models producing instant underwriting decisions.

Decision workflows route applications based on risk scores. High-confidence approvals proceed to automated offer generation. Borderline cases route to underwriter queues with pre-calculated metrics. Obvious declines receive immediate polite rejections. This automation handles 70% of applications without human intervention.

Portfolio management aggregates webhook data across all customers. Track approval rates, default predictions, and portfolio concentration by industry. Webhook-driven analytics provide real-time portfolio health visibility, enabling dynamic risk policy adjustments.

Accounting Automation Workflow

QuickBooks integration maps parsed transactions to chart of accounts. Natural language processing categorizes transactions based on descriptions. Machine learning improves categorization accuracy over time. The result: automated bookkeeping from bank statement uploads.

Expense categorization uses both rule-based and ML approaches. Common vendors map to specific categories via rules. Unknown transactions use ML models trained on historical categorizations. Human review of low-confidence categorizations continuously improves the system.

Reconciliation automation matches bank transactions with accounting records. Webhooks trigger immediate matching attempts. Successful matches clear automatically. Discrepancies route to exception queues for human review. This automation reduces month-end close time by 80%.

Fraud Alert System

Real-time notifications ensure rapid fraud response. High-risk webhooks trigger immediate SMS and email alerts to fraud teams. Medium-risk events create dashboard notifications. Integration with case management systems ensures proper investigation tracking.

Risk thresholds adapt based on customer profiles and historical data. New customers face stricter thresholds until establishing history. Regular customers with clean records enjoy relaxed monitoring. This dynamic approach balances security with customer experience.

Investigation workflows launched by webhooks streamline fraud response. Automated case creation includes all relevant data from webhooks. Investigators see transaction patterns, risk scores, and historical comparisons in unified dashboards. This integration reduces investigation time from hours to minutes.

The ClearStaq API platform provides additional tools for building sophisticated webhook integrations. From development sandboxes to production monitoring, the platform supports the complete webhook lifecycle.

Frequently Asked Questions

How do webhooks work for document processing?

Webhooks send HTTP POST requests to your endpoint when document processing events occur (parsing complete, fraud detected). This eliminates the need to poll for results and enables real-time automation of downstream workflows.

What events does ClearStaq trigger via webhooks?

ClearStaq triggers webhooks for parsing completion, fraud detection alerts, extraction failures, and document processing status updates. Each webhook includes relevant data like parsed transactions or fraud scores.

How do you handle webhook authentication and security?

ClearStaq webhooks use HMAC-SHA256 signature verification to ensure authenticity. Always validate signatures, use HTTPS endpoints, and implement proper error handling with idempotency checks.

What are the performance benefits of webhook-based pipelines?

Webhook pipelines eliminate polling overhead, reduce API calls by up to 90%, enable sub-second response times, and scale automatically with document volume without constant resource consumption.

How do you test webhook integrations during development?

Use ngrok to expose local endpoints for testing, implement webhook replay functionality, create comprehensive integration tests, and validate signature verification before production deployment.

Start Building Real-Time Processing Workflows

Start building real-time bank statement processing workflows today. Our webhook system handles millions of documents with 99.9% delivery reliability.

ClearStaq Team

Engineering Team

The ClearStaq team builds AI-powered tools for bank statement parsing, fraud detection, and income verification.